AI Operations

Turning AI Experiments into Enterprise-Grade Systems.

At Daksario, we specialize in operationalizing AI—not just building models, but ensuring they are secure, reliable, scalable, and compliant in real-world environments. Our work spans the full AI lifecycle, from experimentation to production and long-term governance.

Our AI Operations Capabilities

MLOps

Model lifecycle management

CI/CD for machine learning

Feature stores & model registries

Cloud & hybrid deployments

LLMOps

Prompt engineering & versioning

Evaluation frameworks for LLM outputs

Safety, bias, and hallucination controls

Cost governance for GenAI workloads

AI Governance & Responsible AI

Model explainability & documentation

Compliance-ready audit trails

Risk assessments for regulated industries

Secure AI architecture design

AI Strategy & Enterprise Transformation

AI readiness & maturity assessment

Enterprise AI roadmapping

Use case prioritization & ROI modeling

AI operating model design

Build vs. buy vs. partner advisory

AI investment governance frameworks

Enterprise MLOps Platform (Healthcare & Finance)

Challenge

Teams were deploying machine learning models inconsistently across environments, leading to:

High deployment risk

Poor model traceability

Regulatory and audit exposure

What We Delivered

End-to-end MLOps pipeline (training → validation → deployment)

Automated CI/CD for ML models

Centralized model registry with versioning and lineage

Environment parity across dev, staging, and production

Role-based access and audit logs

Impact

60–70% reduction in deployment time

Audit-ready model governance

Consistent performance across environments
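To make the idea of a centralized model registry with versioning, lineage, and audit logs more concrete, here is a minimal in-memory sketch in Python. It is an illustration only, not the system delivered in this engagement: the `ModelRegistry` and `ModelVersion` names, the fields, and the log format are all hypothetical, and a real registry would sit on durable storage with access control in front of it.

```python
from __future__ import annotations

import hashlib
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class ModelVersion:
    """One immutable registry entry (all field names are hypothetical)."""
    name: str
    version: int
    artifact_sha256: str        # fingerprint of the serialized model
    training_data_ref: str      # pointer to the dataset used for training
    code_commit: str            # source revision that produced the model
    parent_version: int | None  # previous version, forming the lineage chain
    registered_by: str
    registered_at: str


class ModelRegistry:
    """In-memory registry that assigns versions and keeps an audit log."""

    def __init__(self) -> None:
        self._versions: dict[str, list[ModelVersion]] = {}
        self.audit_log: list[str] = []

    def register(self, name: str, artifact: bytes, training_data_ref: str,
                 code_commit: str, actor: str) -> ModelVersion:
        history = self._versions.setdefault(name, [])
        record = ModelVersion(
            name=name,
            version=len(history) + 1,
            artifact_sha256=hashlib.sha256(artifact).hexdigest(),
            training_data_ref=training_data_ref,
            code_commit=code_commit,
            parent_version=history[-1].version if history else None,
            registered_by=actor,
            registered_at=datetime.now(timezone.utc).isoformat(),
        )
        history.append(record)
        # Append-only log line: who registered which version, and when.
        self.audit_log.append(
            f"{record.registered_at} {actor} registered {name} v{record.version}"
        )
        return record

    def lineage(self, name: str, version: int) -> list[int]:
        """Walk parent links from the given version back to the first one."""
        by_version = {r.version: r for r in self._versions[name]}
        chain: list[int] = []
        current: int | None = version
        while current is not None:
            chain.append(current)
            current = by_version[current].parent_version
        return chain
```

Registering the same model name twice yields versions 1 and 2, and `lineage(name, 2)` walks back through both. Hashing the artifact means an auditor can check that the model running in production is byte-for-byte the one that was registered.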

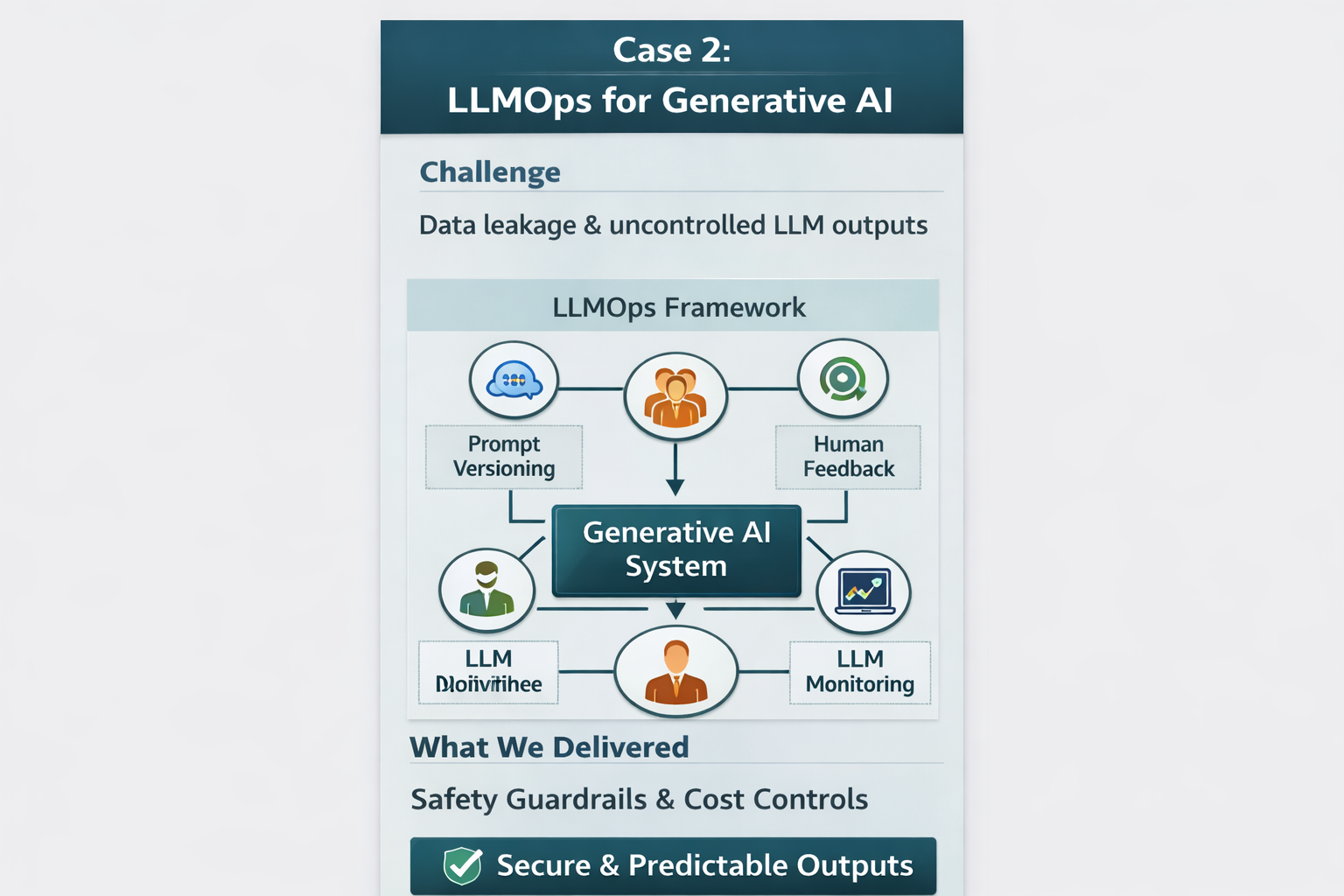

LLMOps for Generative AI Applications

Challenge

Rapid adoption of large language models created risks around:

Data leakage

Hallucinations

Cost overruns

Lack of monitoring and controls

What We Delivered

LLMOps framework for prompt versioning and evaluation

Guardrails for sensitive data and PII

Usage-based cost monitoring and optimization

Human-in-the-loop validation workflows

Observability for latency, accuracy, and drift

Impact

Predictable GenAI behavior in production

Controlled and explainable outputs

40%+ reduction in inference cost
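As a rough illustration of two items from this LLMOps engagement, PII guardrails and usage-based cost monitoring, the Python sketch below redacts sensitive values from a prompt before it leaves the organization and tracks spend against a budget. Everything in it is an assumption made for illustration: the two regex patterns, the `redact_pii` and `CostMeter` names, and the price and budget figures are hypothetical. Real PII detection needs far broader coverage than two patterns.

```python
import re
from dataclasses import dataclass

# Illustrative patterns only: an email address and a US-style SSN.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def redact_pii(text: str) -> str:
    """Replace each match with a typed placeholder such as [EMAIL]."""
    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


@dataclass
class CostMeter:
    """Accumulates spend from token counts against a fixed budget.

    The price and budget are supplied by the caller; no real provider
    pricing is assumed here.
    """
    price_per_1k_tokens: float
    budget: float
    spent: float = 0.0

    def record(self, tokens: int) -> None:
        # Convert a token count into currency and add it to the running total.
        self.spent += tokens / 1000 * self.price_per_1k_tokens

    def over_budget(self) -> bool:
        return self.spent > self.budget


if __name__ == "__main__":
    prompt = "Contact jane.doe@example.com, SSN 123-45-6789"
    print(redact_pii(prompt))  # Contact [EMAIL], SSN [SSN]

    meter = CostMeter(price_per_1k_tokens=0.5, budget=1.5)
    meter.record(2000)
    meter.record(2000)
    print(meter.spent, meter.over_budget())  # 2.0 True
```

Typed placeholders such as `[EMAIL]` keep the redacted prompt readable for the model and for reviewers in a human-in-the-loop workflow. The meter takes token counts as input, so it stays independent of any particular model provider or tokenizer.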